Brands increasingly use artificial intelligence (AI) and natural language processing (NLP) technologies to moderate user-generated content (UGC). AI models are typically trained on examples of acceptable and unacceptable content and then scan the UGC uploaded to a website for potential violations of a brand’s policies, such as copyright infringement or spam. The AI then sends flagged content to human moderators for review. That way, brands can filter a large volume of UGC and focus their resources on more critical content management issues.

This article discusses automated content moderation and how brands use AI to moderate text and multimedia UGC. We’ll also provide some examples of AI-powered content moderation tools.

What Is Automated Content Moderation?

Before diving into how AI helps brands manage UGC, let’s consider what content moderation automation entails.

The term “content moderation” describes identifying and updating user-generated content before or after it is published on a website, such as a company’s site, online store, or social account. In recent years, predictive algorithms based on NLP and machine learning (ML) have made it easier for digital marketers to moderate UGC and automate this process.

In a nutshell, AI-powered content moderation (sometimes called “content review”) is an automated process of screening, classifying, and editing user-generated text and multimedia content published on a website. This activity can range from simple tasks like approving or deleting comments on a product’s landing page to more complex functions like evaluating social posts for compliance with legal regulations.

Automated content moderation isn’t limited to digital marketing. It applies to any type of user content published on the web that needs to be evaluated, monitored, and updated.

For example, online retailers often use content moderation tools to verify user-contributed comments and remove inappropriate or harmful messages. In addition, some companies use content moderation AI to screen job applications, social media posts, and online reviews on their websites.

Social networks like LinkedIn and Facebook use their own proprietary ML algorithms to automate content moderation. For example, Facebook trains machine learning models to detect user content that violates its guidelines and to automatically remove it or block it from appearing.

What Is User-Generated Content?

UGC is any kind of text or multimedia content left by a human user on a web page or provided to a business through an application.

For example, an Amazon customer may post a comment on a product review on amazon.com. The online retailer uses NLP algorithms and deep learning tools to automatically identify and remove inappropriate comments that violate Amazon’s policies, such as spam, insults, and offensive content.

Brands also use machine learning and NLP to categorize UGC for management purposes. For instance, a brand may create a set of rules for user-submitted comments and use those rules to automatically tag messages as spam or abuse, thereby enforcing those guidelines across the organization.
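A rule set like the one described above can be as simple as a table of patterns per tag. The sketch below is a minimal illustration, assuming a hypothetical `RULES` table and a helper named `tag_comment`; real rule sets are much larger and are updated regularly.

```python
import re

# Hypothetical rule set: each tag maps to regex patterns.
# Production rule sets are far larger and maintained over time.
RULES: dict[str, list[str]] = {
    "spam": [r"https?://\S+", r"\bbuy now\b", r"\bfree money\b"],
    "abuse": [r"\bidiot\b", r"\bstupid\b"],
}

# Compile once so every incoming comment is checked against the same rules.
COMPILED = {
    tag: [re.compile(pattern, re.IGNORECASE) for pattern in patterns]
    for tag, patterns in RULES.items()
}


def tag_comment(text: str) -> set[str]:
    """Return every tag whose rules match the comment (empty set if none)."""
    return {
        tag
        for tag, patterns in COMPILED.items()
        if any(p.search(text) for p in patterns)
    }
```

For example, `tag_comment("Buy now at http://example.com")` returns `{"spam"}`. Because the same rules run on every message, the guidelines are applied consistently, but keyword rules also miss paraphrases, which is one reason brands pair them with ML models.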

Similarly, a large food company may use machine learning to identify consumer complaints about the quality of its products, which then triggers a manual review of these cases by team members who double-check the claims.
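To make the complaint-detection idea concrete, here is a minimal sketch using a multinomial naive Bayes classifier, one common text classification technique; we don’t know which model any particular company uses. The training sentences, the labels `"complaint"`/`"other"`, and the `route` helper are all hypothetical.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into simple word tokens."""
    return re.findall(r"[a-z']+", text.lower())


class NaiveBayes:
    """Multinomial naive Bayes text classifier with add-one (Laplace) smoothing."""

    def fit(self, texts: list[str], labels: list[str]) -> "NaiveBayes":
        self.classes = sorted(set(labels))
        self.log_priors: dict[str, float] = {}
        self.word_counts: dict[str, Counter] = {}
        self.totals: dict[str, int] = {}
        self.vocab: set[str] = set()
        for c in self.classes:
            docs = [t for t, y in zip(texts, labels) if y == c]
            self.log_priors[c] = math.log(len(docs) / len(texts))
            counts = Counter(w for d in docs for w in tokenize(d))
            self.word_counts[c] = counts
            self.totals[c] = sum(counts.values())
            self.vocab.update(counts)
        return self

    def predict(self, text: str) -> str:
        v = len(self.vocab)

        def log_score(c: str) -> float:
            s = self.log_priors[c]
            for w in tokenize(text):
                # Smoothing keeps unseen words from zeroing out the probability.
                s += math.log((self.word_counts[c][w] + 1) / (self.totals[c] + v))
            return s

        return max(self.classes, key=log_score)


# Hypothetical, tiny training set; a real model needs far more labeled data.
TRAIN_TEXTS = [
    "the yogurt was spoiled and smelled bad",
    "found mold in the bread, very disappointed",
    "the cereal tasted stale and the box was damaged",
    "love this granola, great taste",
    "delicious snack, will buy again",
    "fast delivery and tasty cookies",
]
TRAIN_LABELS = ["complaint"] * 3 + ["other"] * 3

model = NaiveBayes().fit(TRAIN_TEXTS, TRAIN_LABELS)


def route(message: str) -> str:
    """Send predicted complaints to the team for manual review."""
    return "manual_review" if model.predict(message) == "complaint" else "no_action"
```

Here `route("the milk was spoiled and tasted bad")` is queued for manual review, while praise passes through. The model only decides what people look at first; team members still verify each claim.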

Here are examples of the controversial and toxic UGC types AI systems detect and moderate:

- Mild and severe swearing

- Sexual content

- LGBTQ slurs

- Viral and promotional events

- Political references

- Racism

- Maltreatment and violence

- Spam

- War and terrorism

- Drug references

- Anti-religious content

Benefits of Content Moderation Automation AI

AI-powered automated content moderation is a convenient way to minimize marketing costs and maximize efficiency. It also helps keep user-generated content safe and trustworthy, which can strengthen brand trust.

With 4.62 billion people around the globe actively using social media, businesses are constantly looking for ways to manage and moderate the growing volume of user-generated content published on their digital assets.

A recent study by the social media management platform Sprinklr found that one in three companies in the United States plans to implement AI-powered content moderation technologies in 2023, and projected that by 2025, 80% of American companies will use AI for their digital content management.

Below, we look into some benefits of AI-powered automated content moderation.

1. Automated Content Review Increases Efficiency

With the help of AI-powered UGC moderation solutions, brands can screen and approve thousands of comments, social media posts, and other user interactions in mere minutes. Automation makes the moderation process faster and more efficient, translating into time and money savings for the business.

2. Controlled Brand Experience

Using AI to moderate online UGC streamlines brand experiences and keeps company messages consistent across channels. In addition, algorithms can analyze and filter negative comments and reviews, which makes it easier to maintain a positive reputation.

3. Real-Time Monitoring

AI algorithms can screen and remove inappropriate content in real time, without human intervention, which helps protect brand safety.
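Real-time screening means each comment is checked the moment it arrives, before it is published. The sketch below is a hypothetical illustration, not any vendor’s implementation: it combines a blocklist with a per-user sliding-window rate check (one common signal of spam floods). The class name `StreamScreener`, its parameters, and the action strings are assumptions.

```python
import time
from collections import defaultdict, deque


class StreamScreener:
    """Screens comments one at a time as they arrive (hypothetical sketch).

    Two assumed signals: a blocklist of terms, and a per-user rate limit
    (more than `max_posts` within `window_s` seconds looks like a spam flood).
    """

    def __init__(self, blocklist, max_posts=3, window_s=60.0):
        self.blocklist = {w.lower() for w in blocklist}
        self.max_posts = max_posts
        self.window_s = window_s
        self.history = defaultdict(deque)  # user_id -> timestamps of recent posts

    def screen(self, user_id, text, now=None):
        now = time.monotonic() if now is None else now
        posts = self.history[user_id]
        # Drop timestamps that have fallen out of the sliding window.
        while posts and now - posts[0] > self.window_s:
            posts.popleft()
        # Every attempt counts toward the rate limit, including blocked ones.
        posts.append(now)
        if len(posts) > self.max_posts:
            return "block:rate_limit"
        # Naive whitespace tokenization; punctuation attached to a word defeats it.
        if set(text.lower().split()) & self.blocklist:
            return "block:blocklist"
        return "publish"
```

The optional `now` argument makes the screener easy to test with fixed timestamps; in production it falls back to `time.monotonic()`.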

4. Content Engagement

The tools and services we have studied can help content managers engage more deeply with users by analyzing customer sentiment and powering chatbots that answer customers’ queries and resolve issues.
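Sentiment analysis can be illustrated with a lexicon-based scorer, one of the simplest approaches; commercial tools typically use trained models instead. This is a minimal sketch, and the `LEXICON` weights and `NEGATIONS` set are hypothetical.

```python
import re

# Tiny, hypothetical sentiment lexicon; real tools use large curated
# lexicons or trained models.
LEXICON = {
    "love": 2, "great": 2, "good": 1, "helpful": 1,
    "bad": -1, "slow": -1, "broken": -2, "terrible": -2, "hate": -2,
}
NEGATIONS = {"not", "never", "no", "don't", "isn't"}


def sentiment_score(text: str) -> int:
    """Sum word polarities, flipping a word's sign after a negation."""
    tokens = re.findall(r"[a-z']+", text.lower())
    score = 0
    for i, tok in enumerate(tokens):
        value = LEXICON.get(tok, 0)
        # Flip polarity when the previous token is a negation ("not good").
        if i > 0 and tokens[i - 1] in NEGATIONS:
            value = -value
        score += value
    return score


def label(text: str) -> str:
    """Map the numeric score to a coarse sentiment label."""
    s = sentiment_score(text)
    return "positive" if s > 0 else "negative" if s < 0 else "neutral"
```

A brand could, for instance, route posts labeled `"negative"` to a support chatbot or agent and thank users who post `"positive"` ones.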

5. More Sales and Repeat Visitors

Automated content reviews can help companies increase sales by making it easier to convert visitors into customers. For example, visitors who find a site free of spam and abusive comments may spend more time on it and come back as repeat visitors.

In addition, marketers use AI-powered content moderation systems to analyze and optimize user experiences. For example, a brand can measure how many images are posted on its social media accounts and compare this data with other brands to gauge the effectiveness of its social media profile.

Content Moderation Stages

In practice, AI-powered automated content moderation consists of four main stages:

- Content classification. Classification is the first step in moderating UGC. A text classification algorithm assigns a piece of content, such as a comment written by a customer, to one or more categories (for example, spam, abuse, or acceptable). The input may take the form of words, terms, or phrases. Text classification algorithms range from simple keyword rules to machine learning models such as naive Bayes classifiers and neural networks.

- Content analysis. After the data is classified, marketers can analyze it to identify violations of a brand’s terms and conditions. For example, a content analysis algorithm assesses whether a user-contributed comment violates the rules of use for a specific website.

- Content evaluation. After an algorithm identifies a potential violation, marketers can evaluate the flagged content using human moderation. Brands often use human experts to verify whether or not flagged UGC violates the company’s terms of service or other policies.

- Content approval and removal. Marketers can then approve or remove flagged content, or hold it back if it needs further editing. For example, a brand may allow a certain amount of negative feedback on its website, but only under certain conditions.
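The four stages above can be sketched as a small routing function. This is a minimal sketch under stated assumptions: `classify` is a toy keyword scorer standing in for a trained model, and the phrase lists, category names, and the thresholds `REMOVE_AT` and `REVIEW_AT` are hypothetical.

```python
from dataclasses import dataclass

# Illustrative thresholds (assumptions, not recommended values).
REMOVE_AT = 0.9  # at or above: confident violation, remove automatically
REVIEW_AT = 0.5  # between REVIEW_AT and REMOVE_AT: send to a human moderator

# Toy stand-in for a trained classifier: hypothetical phrases per category.
FLAGGED_PHRASES = {
    "spam": ["buy now", "click here"],
    "abuse": ["idiot", "loser"],
}


@dataclass
class Decision:
    action: str    # "approve", "remove", or "human_review"
    category: str  # policy category the content was assigned to
    score: float   # confidence in [0, 1]


def classify(text: str) -> tuple[str, float]:
    """Stage 1: assign the text to its highest-scoring category."""
    lowered = text.lower()
    best = ("none", 0.0)
    for category, phrases in FLAGGED_PHRASES.items():
        hits = sum(phrase in lowered for phrase in phrases)
        score = min(1.0, 0.5 * hits)  # each matched phrase adds 0.5
        if score > best[1]:
            best = (category, score)
    return best


def moderate(text: str, allowed: frozenset[str] = frozenset()) -> Decision:
    category, score = classify(text)
    # Stage 2: analysis against this site's policy; some categories may be allowed.
    if category == "none" or category in allowed:
        return Decision("approve", category, score)
    # Stage 4 (automatic): confident violations are removed without review.
    if score >= REMOVE_AT:
        return Decision("remove", category, score)
    # Stage 3: uncertain cases go to a human moderator for the final call.
    if score >= REVIEW_AT:
        return Decision("human_review", category, score)
    return Decision("approve", category, score)
```

Routing by confidence is one common way to divide the work: the system handles clear-cut cases on its own, and human moderators see only the ambiguous ones.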

To sum up, content moderation applies a set of rules (NLP and machine learning algorithms) to scanned UGC and verifies whether that content violates specific policies. The system can be set up to automatically remove or update any inappropriate content it identifies, while human moderators oversee the process and approve or reject flagged content.
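Updating content, rather than removing it, often means masking offensive words so the rest of the comment can stay online. A minimal sketch, assuming a hypothetical term list (`MASKED_TERMS`) and whole-word, case-insensitive matching:

```python
import re

# Hypothetical list of terms to mask; real lists are larger and localized.
MASKED_TERMS = ["darn", "heck"]

# \b word boundaries avoid masking substrings of innocent words ("checker").
_PATTERN = re.compile(
    r"\b(" + "|".join(map(re.escape, MASKED_TERMS)) + r")\b",
    re.IGNORECASE,
)


def mask_terms(text: str) -> str:
    """Replace each listed term with asterisks, keeping its first letter."""
    return _PATTERN.sub(
        lambda m: m.group(0)[0] + "*" * (len(m.group(0)) - 1), text
    )
```

For example, `mask_terms("What the heck, this darn app")` returns `"What the h***, this d*** app"`.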

Content Moderation AI Tools

AI-enabled content moderation software automates the moderation process across UGC channels and sites. In addition, these systems have advanced NLP and ML capabilities, which help identify potential violations, parse comments, determine appropriate filters, and create customized responses.

Here are some of the best content moderation AI tools in 2022:

1. WebPurify AI-based Image Moderation

WebPurify automates the review and removal of inappropriate user-submitted images on websites and social media. The platform was designed to deliver real-time results. For example, it can automatically screen and approve an image before it gets published on social media, landing pages, or chat channels.

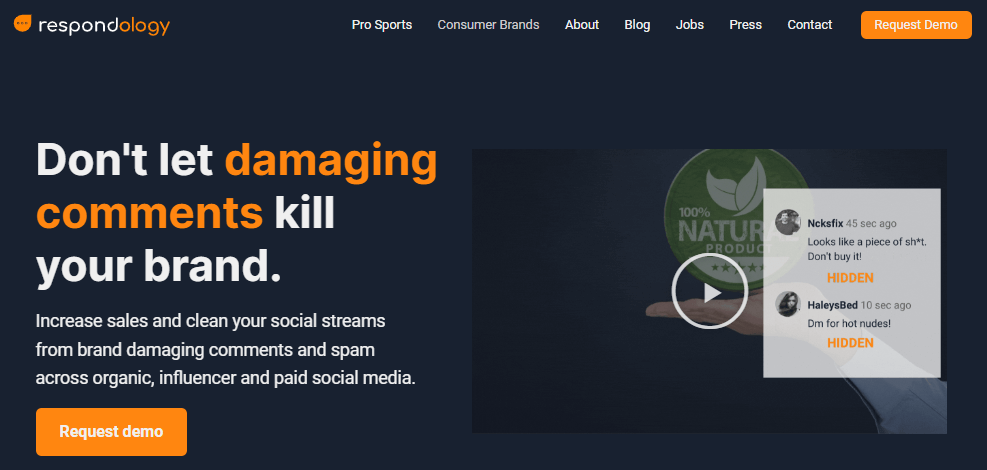

2. Respondology Social Media Moderation

Respondology screens user comments and posts on social media and flags toxic, damaging, and spam content, helping brands avoid digital backlash and promote a positive public image on Facebook, Instagram, Twitter, and other networks.

3. Alibaba Cloud

Alibaba Cloud’s content moderation service is a comprehensive solution that leverages Alibaba’s neural networks and Big Data analysis to monitor and moderate various text and multimedia content. Alibaba Cloud positions it as one of the industry’s fastest and most accurate automated content moderation tools, citing a response time below 0.1 seconds and an accuracy rate above 95 percent.

4. Implio by Besedo

Besedo’s Implio offers an AI- and human-powered tool for moderating UGC on websites, apps, and social platforms. This hybrid solution has customizable filters, highlights suspicious user-generated phrases, and integrates with almost any website CMS, social network, or mobile app. Implio also relies on human moderation as part of the process and provides content insights and analytics for marketers who want to improve their brand reputation online.

5. Zesty CMS

Zesty is a cloud-based hybrid CMS that leverages AI algorithms to moderate visual and text UGC in real time and help brands avoid potential PR disasters.

Final Thoughts

In the future, companies will have to do more to curb negativity and false rumors on their websites and social networks.

However, with the help of automated content moderation tools, brands can hire fewer moderators, keep moderation running around the clock, and save time that would otherwise be spent on administrative tasks.

AI-powered content moderation is likely to play a major role in how brands communicate with their customers. With this knowledge, marketers can create more efficient and intelligent digital campaigns that give them an edge over competitors.